Navigate to this website and click on the E-commerce site. The resulting URL is the one we will scrape.

Web scraping may not work because of the following challenges:

- The URL of the website you wish to scrape does not return a response code of 200, for example because the owner of the website disallows scraping.
- The web page structure is complicated and dynamic. A scraper is built for one specific site, so it might fail on such pages.
- The website receives a large number of requests from a specific IP address. The owner of the site can block that IP, after which the web scraper will not work.
- The website uses a CAPTCHA. CAPTCHAs are designed to block automated software and robot access, so the web scraper will not work.

Required Python libraries for the process:

- requests: This library allows us to send HTTP requests easily and efficiently and returns the required data. In our case, it returns the HTML source code of a website.
- bs4 (BeautifulSoup4): We need to install this library (the `beautifulsoup4` package, imported as `bs4`) to convert the fetched HTML content into BeautifulSoup objects, which are ordinary Python objects.
- lxml parser: This parser copes well even with broken HTML source code, so it is preferred over the default HTML parser for parsing the HTML content.

In this blog, we will see the process of web scraping in action to fetch the required details. We will scrape a test e-commerce website, provided by a web scraper, to fetch the different items from the site. We will also look at a few conditions that can be applied so that only the data matching those conditions is returned. We will use the libraries and the parser listed above to parse the HTML content. Finally, we will look at how to automate the search for the top items at a provided interval and write the output to a text file.
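As a rough illustration of the plan described above, here is a minimal sketch that fetches a page with requests, parses it with BeautifulSoup and the lxml parser, applies a price condition, and writes the result to a text file at a fixed interval. The URL, the CSS selectors, the file name, and all function names are hypothetical placeholders, not taken from the actual test site; they would need to be adapted to the real page structure.

```python
import time

import requests
from bs4 import BeautifulSoup

# Hypothetical placeholders: the real URL and selectors depend on the target page.
URL = "https://example.com/test-site/e-commerce"
ITEM_SELECTOR = "div.item"
NAME_SELECTOR = ".title"
PRICE_SELECTOR = ".price"


def fetch_html(url):
    """Return the page's HTML source, or None if the status code is not 200."""
    response = requests.get(url, timeout=10)
    if response.status_code != 200:
        return None
    return response.text


def parse_items(html, parser="lxml"):
    """Convert HTML into a list of {"name", "price"} dictionaries."""
    soup = BeautifulSoup(html, parser)
    items = []
    for card in soup.select(ITEM_SELECTOR):
        name = card.select_one(NAME_SELECTOR)
        price = card.select_one(PRICE_SELECTOR)
        if name is None or price is None:
            continue  # skip cards that do not match the assumed layout
        items.append({
            "name": name.get_text(strip=True),
            # Assumes prices look like "$295.99" (no thousands separators).
            "price": float(price.get_text(strip=True).lstrip("$")),
        })
    return items


def filter_items(items, max_price):
    """Apply a condition: keep only items at or below max_price."""
    return [item for item in items if item["price"] <= max_price]


def write_items(items, path):
    """Write one item per line to a plain text file."""
    with open(path, "w", encoding="utf-8") as handle:
        for item in items:
            handle.write(f"{item['name']}: ${item['price']:.2f}\n")


def run_periodically(interval_seconds, max_price, path="items.txt", rounds=3):
    """Scrape `rounds` times, sleeping `interval_seconds` after each attempt."""
    for _ in range(rounds):
        html = fetch_html(URL)
        if html is not None:
            write_items(filter_items(parse_items(html), max_price), path)
        time.sleep(interval_seconds)
```

Calling `run_periodically(600, max_price=500)` would, under these assumptions, refresh `items.txt` every ten minutes with the items priced at or below 500. Checking the status code before parsing covers the first challenge listed above; the other challenges (blocked IPs, CAPTCHAs, dynamic pages) are not handled by this sketch.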

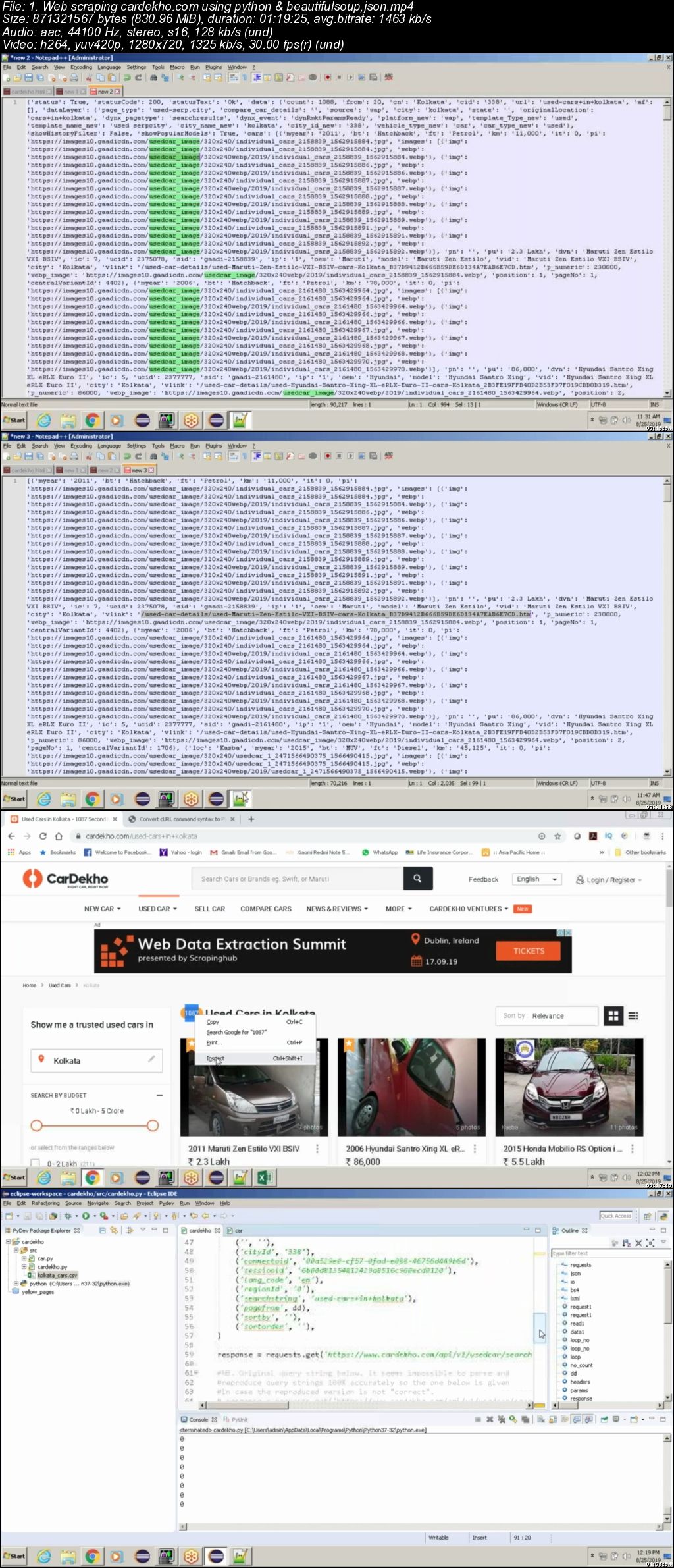

For a web scraper to scrape a site, it first requires the URL of the site it needs to scrape. The scraper then extracts the required data from the HTML source code that is fetched and outputs the data in a user-defined format. The output data can be stored as plain text, in an Excel sheet or CSV file, or even in a JSON file.
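To make the output step concrete, the following sketch stores the same extracted records as plain text, CSV, and JSON using only the Python standard library. The sample records and the function names are made up for illustration; in a real scraper the records would come from the parsed HTML.

```python
import csv
import json

# Hypothetical records standing in for data already extracted from the HTML.
records = [
    {"name": "Example item A", "price": 19.99},
    {"name": "Example item B", "price": 5.49},
]


def save_as_text(rows, path):
    """Write one 'name: price' line per record."""
    with open(path, "w", encoding="utf-8") as handle:
        for row in rows:
            handle.write(f"{row['name']}: {row['price']}\n")


def save_as_csv(rows, path):
    """Write a header row followed by one CSV row per record."""
    with open(path, "w", newline="", encoding="utf-8") as handle:
        writer = csv.DictWriter(handle, fieldnames=["name", "price"])
        writer.writeheader()
        writer.writerows(rows)


def save_as_json(rows, path):
    """Write the records as a JSON array of objects."""
    with open(path, "w", encoding="utf-8") as handle:
        json.dump(rows, handle, indent=2)
```

A CSV file written this way can also be opened directly in Excel, which is often enough when a spreadsheet is the desired output.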

Assume you need to extract some information from the web. For example, you need some data on Manhattan. So, what exactly do you do? You simply copy and paste material from a website into your own document. But what if you need to extract a significant volume of data from a website as soon as possible? Copying and pasting will not work in this case! That's when web scraping comes in handy. Web scraping uses automation to collect thousands or even millions of records in far less time. In this blog, we will explore web scraping, how it works, its challenges, and the Python libraries required for the process. We will also demonstrate, step by step, how to build a web scraper using Python.

Web scraping is the process of collecting and transforming large volumes of data from a website using computer software. It is widely known as an effective method for generating datasets for educational purposes, and it can also be used to scrape the required details from job sites, which makes it easier to search for jobs on a regular basis. The method extracts large amounts of data from various sites in an automated fashion. Most of the returned data is in an unstructured HTML format, which is then transformed into a structured format in a database before it is used in applications. Web scraping helps us retrieve either all the data from a website or only the specific details a user requires. It is good practice to define the structure of what is required before beginning to scrape a website, so that only the required information is pulled out.
